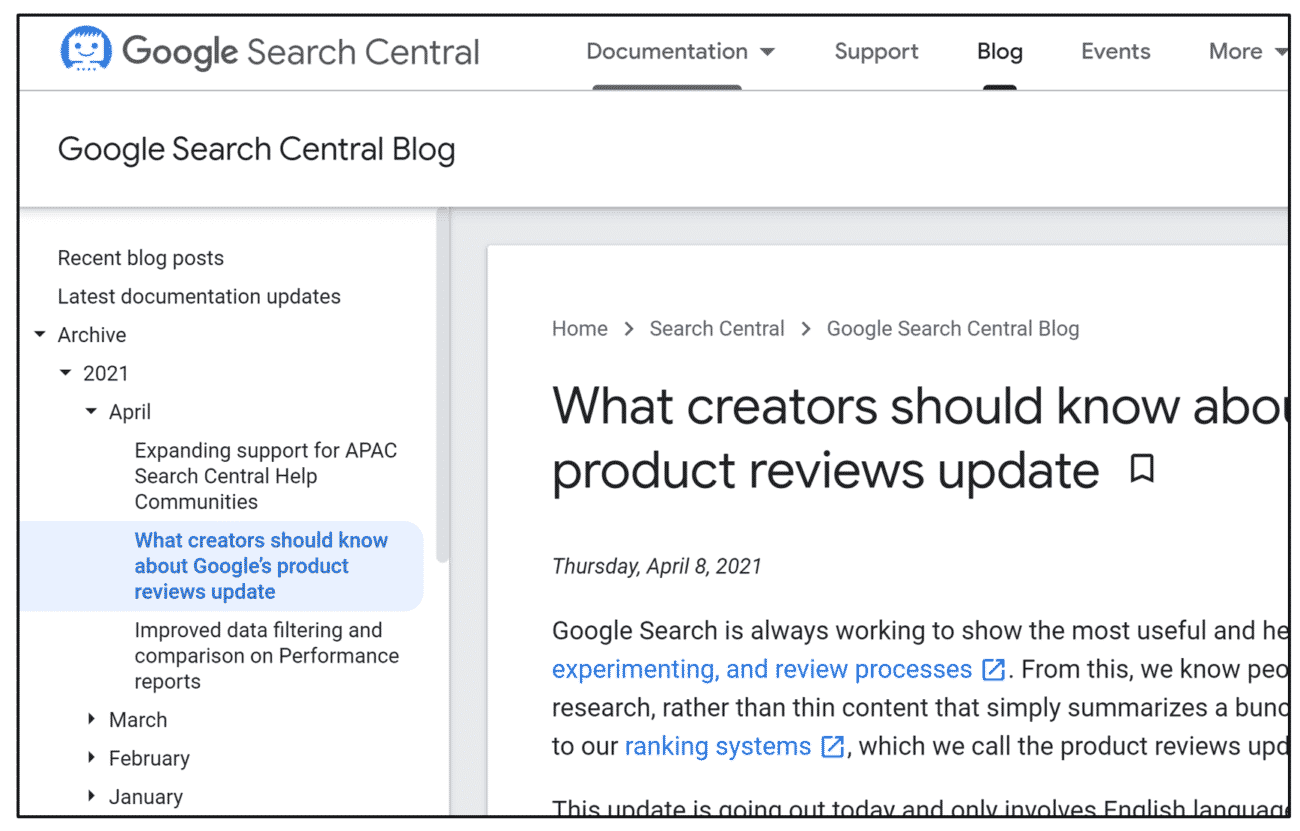

Google Search Central Live NYC 2025 gathered industry leaders, publishers and digital enthusiasts to explore the evolving landscape of search. The event was exceptionally well organised and drew over 300 attendees, a sign that it was a carefully planned conference rather than a side project. Despite a strong security presence, which was understandable given the turnout, the conference…

Insights from Google Search Central Live NYC 2025: AI, Policies and Search